Agent Harness

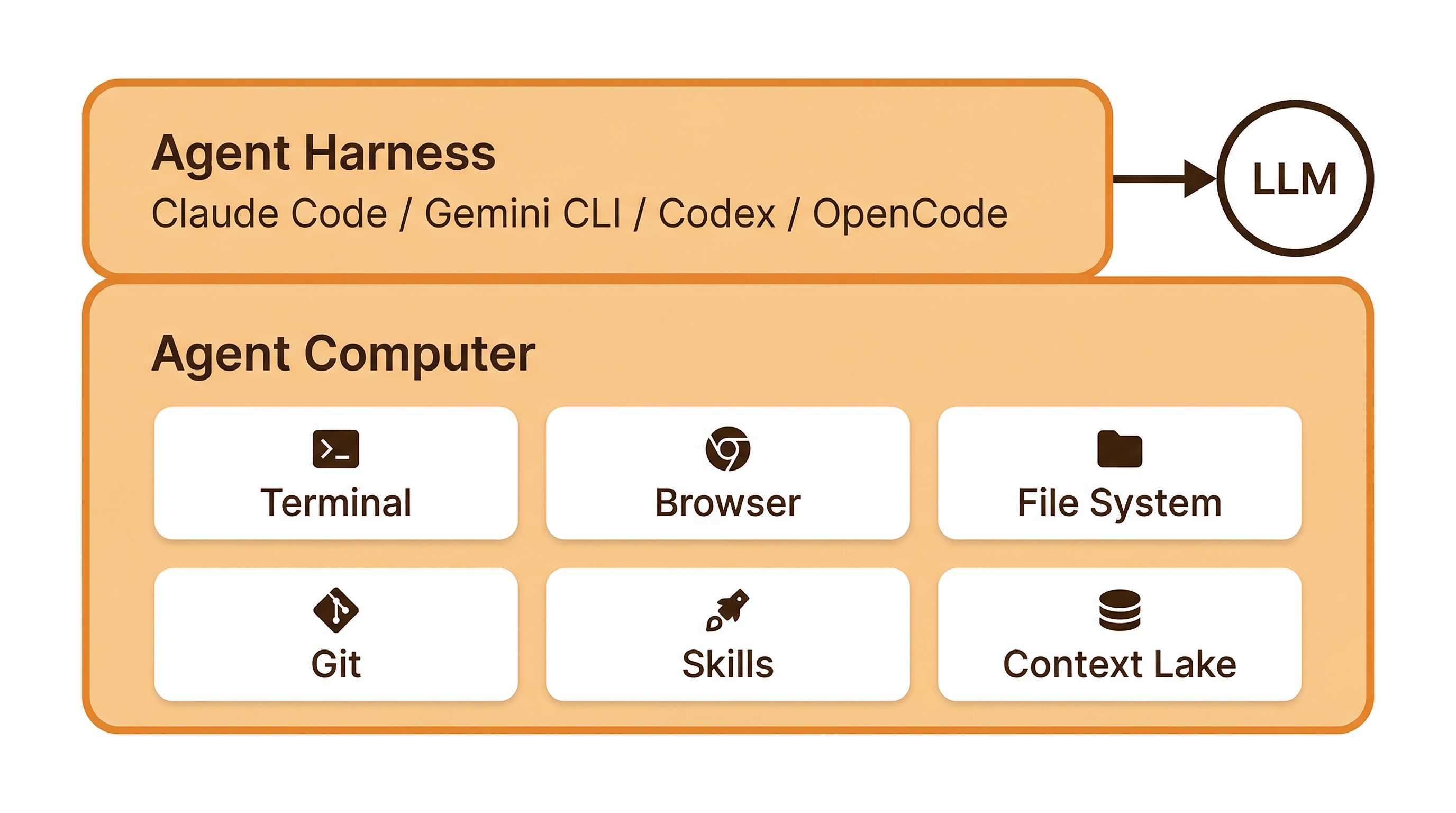

Every Agent Computer is a persistent cloud machine with a file system, terminal, browser, and installed skills. The agent harness is the AI layer that runs on top of this machine. It receives your task prompt, calls a language model for reasoning, and uses the computer’s tools to do the work.

The harness is swappable — you can run Claude Code on a task, then switch to Gemini CLI for the next one. The computer doesn’t care which agent runs on it. Files, git state, installed tools, and browser sessions persist across harness changes.

Executors

Each harness instance runs one executor: the specific AI coding agent that drives the work.

| Executor | Agent | Description |
|---|---|---|
| claude | Claude Code | Anthropic’s coding agent. Default executor. |
| gemini | Gemini CLI | Google’s coding agent. Auto-routes between flash and pro models. |
| codex | Codex | OpenAI’s coding agent. |
| opencode | OpenCode | Open-source multi-provider agent. |

Every executor has full access to the same set of tools provided by the Agent Computer:

| Tool | What it does |
|---|---|
| Terminal | Full shell access. Install packages, run builds, execute scripts. |
| File system | Read, write, create, delete files. Persistent across tasks. |
| Git | Clone repos, create branches, commit, push. GitHub integration built-in. |
| Browser | Full remote Chrome instance. Navigate, interact, take screenshots. Not headless. |
| Skills | Invoke installed skills: team-private, built-in, or public. |
| Agent Context | Query your organization’s connected data sources with SQL. |

The difference between executors is which language model powers the reasoning — the tools they can use are identical.

Model Routing

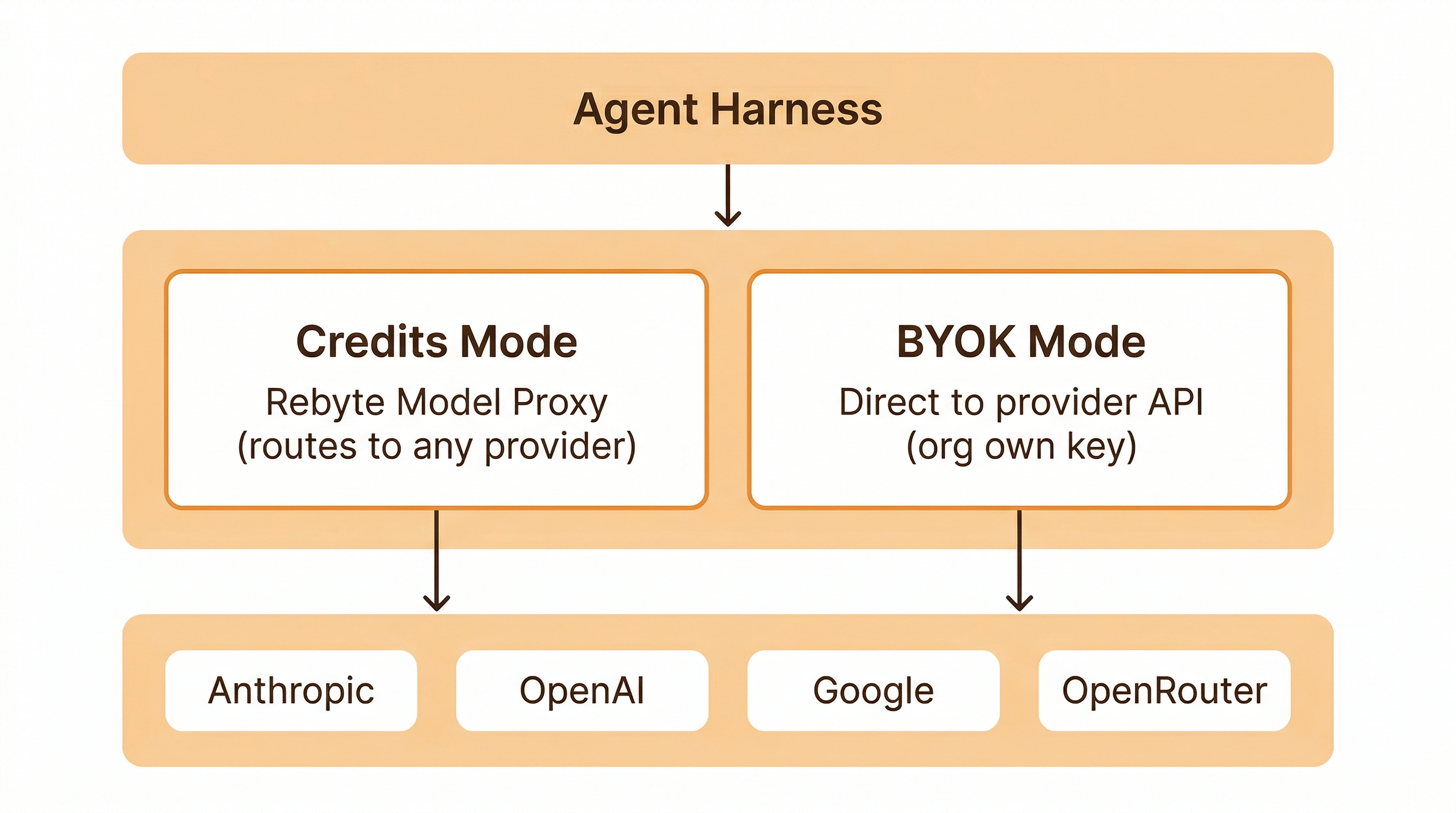

When a task runs, the executor calls an LLM. How that call is routed depends on the auth mode; see Authentication for the full breakdown of credits, BYOK, and OAuth from the user’s perspective.

Credits mode — requests route through the Rebyte model proxy, which handles authentication with upstream providers. The claude executor can access models from any provider (Anthropic, OpenAI, Google, OpenRouter) through this proxy.

BYOK mode — requests go directly to the provider using the organization’s own API key. Each executor is restricted to its native provider’s models only (e.g., claude with BYOK can only use Anthropic models).

OAuth mode — available for Claude Code and Gemini CLI only. Each member connects their personal account; traffic goes direct to the provider, paid by the member’s own subscription. No credits consumed, no org API key shared.
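The three modes above can be summarized as a small lookup. This is a minimal sketch for illustration only, not Rebyte’s implementation: the function name `describe_route` and the returned labels are hypothetical, while the mode values (`credits`, `api_key`, `oauth`) and the executor names come from this page.

```python
from enum import Enum


class AuthMode(Enum):
    CREDITS = "credits"
    BYOK = "api_key"
    OAUTH = "oauth"


# Executors this page lists as supporting each mode.
BYOK_EXECUTORS = {"claude", "codex", "gemini"}  # opencode is proxy-only
OAUTH_EXECUTORS = {"claude", "gemini"}          # Claude Code and Gemini CLI


def describe_route(executor: str, mode: AuthMode) -> dict:
    """Return where an LLM request goes and who pays (hypothetical labels)."""
    if mode is AuthMode.CREDITS:
        # Requests go through the Rebyte model proxy and consume credits.
        return {"via": "rebyte-proxy", "billed_to": "rebyte-credits"}
    if mode is AuthMode.BYOK:
        if executor not in BYOK_EXECUTORS:
            raise ValueError(f"BYOK is not available for executor {executor!r}")
        # Requests go straight to the provider with the org's own API key.
        return {"via": "provider-direct", "billed_to": "org-api-key"}
    if executor not in OAUTH_EXECUTORS:
        raise ValueError(f"OAuth is not available for executor {executor!r}")
    # Requests go straight to the provider on the member's own subscription.
    return {"via": "provider-direct", "billed_to": "member-subscription"}
```

Only credits mode involves the proxy; both BYOK and OAuth send traffic directly to the provider and differ only in whose account is billed.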

Available Models

| Model | Provider | Executors |
|---|---|---|
| claude-opus-4.6 | Anthropic | claude, opencode |
| claude-sonnet-4.6 | Anthropic | claude, opencode |
| gpt-5.4 | OpenAI | claude, codex, opencode |
| gpt-5.3-codex | OpenAI | claude, codex, opencode |
| gemini-3.1-pro | Google | claude, opencode |
| gemini-3-flash | Google | claude, opencode |
| auto-gemini-3 | Google | gemini |
| minimax-m2.7 | Open Source | claude, opencode |
| kimi-k2.5 | Open Source | claude, opencode |
| glm-5 | Open Source | claude, opencode |

Open Source models are routed via OpenRouter. All other models route directly to their provider’s API.

Defaults

| Executor | Default model |
|---|---|
| claude | claude-sonnet-4.6 |
| codex | gpt-5.4 |
| opencode | gemini-3.1-pro |
| gemini | auto-gemini-3 |

Users can override the model per task.
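To make the default-plus-override behavior concrete, here is a minimal sketch that encodes the two tables on this page as dictionaries. The function `resolve_model` is hypothetical and is not part of any Rebyte API; it only shows how a per-task override could be checked against the executors listed for each model.

```python
from typing import Optional

# Default model per executor, copied from the Defaults table.
DEFAULT_MODELS = {
    "claude": "claude-sonnet-4.6",
    "codex": "gpt-5.4",
    "opencode": "gemini-3.1-pro",
    "gemini": "auto-gemini-3",
}

# Executors that can use each model, copied from the Available Models table.
MODEL_EXECUTORS = {
    "claude-opus-4.6": {"claude", "opencode"},
    "claude-sonnet-4.6": {"claude", "opencode"},
    "gpt-5.4": {"claude", "codex", "opencode"},
    "gpt-5.3-codex": {"claude", "codex", "opencode"},
    "gemini-3.1-pro": {"claude", "opencode"},
    "gemini-3-flash": {"claude", "opencode"},
    "auto-gemini-3": {"gemini"},
    "minimax-m2.7": {"claude", "opencode"},
    "kimi-k2.5": {"claude", "opencode"},
    "glm-5": {"claude", "opencode"},
}


def resolve_model(executor: str, override: Optional[str] = None) -> str:
    """Pick the model for a task: the override if given, else the default."""
    if executor not in DEFAULT_MODELS:
        raise ValueError(f"unknown executor: {executor!r}")
    model = override or DEFAULT_MODELS[executor]
    # Reject models that the table does not list for this executor.
    if executor not in MODEL_EXECUTORS.get(model, set()):
        raise ValueError(f"{model!r} is not available to executor {executor!r}")
    return model
```

For example, `resolve_model("codex")` falls back to `gpt-5.4`, while `resolve_model("codex", "gemini-3-flash")` raises because that model is not listed for the codex executor.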

Admin Controls

Organization admins manage executor and model access through Settings > Integrations > Code Agents.

Per-executor policies

| Setting | Effect |
|---|---|
| enabled | Enable/disable an executor for the entire org |
| authMethod | Pick one of credits, api_key (BYOK), or oauth per executor; see Authentication |
| disabledModels | Block specific models from being used |
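As an illustration of how these three settings interact, the sketch below models one executor’s policy as a dataclass and checks a task against it. The class `ExecutorPolicy`, the function `can_run`, and the reason strings are all hypothetical; the snake_case fields stand in for the `enabled`, `authMethod`, and `disabledModels` settings, and how Rebyte actually stores or evaluates them is not described on this page.

```python
from dataclasses import dataclass, field

# The three values the authMethod setting accepts, per the table above.
AUTH_METHODS = {"credits", "api_key", "oauth"}


@dataclass
class ExecutorPolicy:
    enabled: bool = True                               # "enabled"
    auth_method: str = "credits"                       # "authMethod"
    disabled_models: set = field(default_factory=set)  # "disabledModels"


def can_run(policy: ExecutorPolicy, model: str) -> tuple:
    """Return (allowed, reason) for running a task with this model."""
    if not policy.enabled:
        return False, "executor is disabled for the org"
    if policy.auth_method not in AUTH_METHODS:
        return False, f"unknown auth method: {policy.auth_method}"
    if model in policy.disabled_models:
        return False, f"model {model} is blocked by an admin"
    return True, "ok"


# Example: an org that keeps codex enabled but blocks one model.
codex_policy = ExecutorPolicy(auth_method="api_key", disabled_models={"gpt-5.3-codex"})
```

With this policy, `can_run(codex_policy, "gpt-5.4")` is allowed and `can_run(codex_policy, "gpt-5.3-codex")` is rejected with the blocked-model reason.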

BYOK (Bring Your Own Key)

BYOK lets an organization route LLM requests directly to the provider using its own API keys.

- An admin stores an API key for an executor (claude, codex, or gemini) in Settings > Integrations > Code Agents
- When a team member runs a task, the agent authenticates directly with the provider using the org’s key
- No Rebyte credits are consumed; the org pays the provider directly
- If no BYOK key is configured, the task routes through the Rebyte model proxy and consumes credits

BYOK model restrictions

With BYOK, each executor is restricted to its native provider’s models:

| Executor | Provider | BYOK models |
|---|---|---|
| claude | Anthropic | claude-sonnet-4.6, claude-opus-4.6 |
| codex | OpenAI | gpt-5.4, gpt-5.3-codex |
| gemini | Google | auto-gemini-3 |

opencode does not support BYOK — it routes through the model proxy only.

Without BYOK, the claude executor can access models from any provider through the model proxy. With BYOK, it is restricted to Anthropic models only, since the org’s Anthropic key cannot authenticate against other providers.
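The BYOK rules in this section can be sketched as a single check: fall back to the proxy when no key is stored, and otherwise allow only the executor’s native models. This is a hedged illustration, assuming a hypothetical `plan_request` function and a plain dictionary of stored keys; the model lists are copied from the BYOK models table.

```python
# Models each executor may use with BYOK, from the table above.
# opencode is absent because it does not support BYOK.
BYOK_MODELS = {
    "claude": {"claude-sonnet-4.6", "claude-opus-4.6"},
    "codex": {"gpt-5.4", "gpt-5.3-codex"},
    "gemini": {"auto-gemini-3"},
}


def plan_request(executor: str, model: str, org_keys: dict) -> str:
    """Return "proxy" or "direct" for a task (hypothetical helper).

    org_keys maps executor name -> stored API key; the shape is an assumption.
    """
    key = org_keys.get(executor)
    if executor not in BYOK_MODELS or not key:
        # No BYOK key (or no BYOK support): use the model proxy and credits.
        return "proxy"
    if model not in BYOK_MODELS[executor]:
        # The org's key cannot authenticate against another provider.
        raise ValueError(f"{model!r} is not usable by {executor!r} with BYOK")
    return "direct"
```

So `plan_request("claude", "gpt-5.4", {})` goes through the proxy, while the same call with an Anthropic key stored for claude raises, which mirrors the restriction described above.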